If you’ve ever struggled to get footage from two very different cameras to match, then this article is for you.

Of all the colour grading challenges there are, getting two distinctly different cameras to look the same can be exceptionally hard, especially as the characteristics of the lenses, sensors and compression algorithms vary from camera to camera and are essentially a ‘secret sauce’ for each manufacturer.

But fear not, help is at hand! And in this sponsored post we’ll take a look at what you can do to make your footage look the way it should, regardless of what happened on set, and in a way that will save you time and effort.

That help comes in the form of CineMatch from FilmConvert.

CineMatch takes the guesswork out of matching the underlying colour science of each of your cameras and can transpose one set of colour data to match the sensor characteristics and colour science of your main camera.

This is obviously super useful when working with footage from a multi-camera shoot where, for whatever reason, the production didn’t use two of the same camera, but it is also exceptionally useful for matching stock footage to the look of your current project.

In this article we’ll take a look at:

- The inherent problem of making footage from different cameras match

- The technical explanation of colour science, colour management and CST

- How CineMatch works

- Testing CineMatch – LUTs, False Color and more

- CineMatch – An Editor’s Review

- Tips and Tricks for making the most of CineMatch

Save 10% on CineMatch with the discount code “ELWYN” or download their unlimited free trial here.

Why Footage from Different Cameras Doesn’t Match

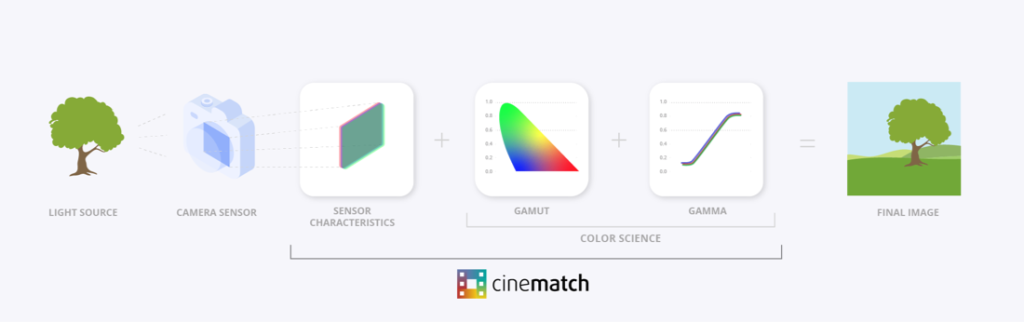

Each camera uses a different sensor and different colour science (the gamut of the colour space and the gamma curve of the encoding) to capture the values of the light it senses and record those within a specific colour space.

So every clip has a colour space to store the data in, and a specific way of fitting that data into that space (colour science).

Sidney Baker Green does a superb job of exploring this topic in the video above.

(FYI his channel is excellent, you should subscribe!)

An important distinction to get into when we’re talking about colour spaces or colour gamuts and camera matching is that colour space does not equal colour science.

Colour science is a sensor characteristic; how a camera is going to interpret those red green and blue primaries within that colour space.

So, for example, you can have a Canon camera that will see a shade of red but a Nikon camera may see that shade of red differently and it still has the ability to hit a certain saturation level within a given colour space but the sensor perceives it differently.

Sidney Baker Green

Understanding Colour Space Transforms

When you’re working with log encoded footage you need to transform the footage from that bigger colour space into your (more limited) display colour space – most often Rec. 709 Gamma 2.4 – so that it ‘looks right’ and not all flat and washed out.

When you have multiple source cameras to work with, making all that footage ‘look right’ means mapping your various input (clip) colour spaces to your singular output (display) colour space.

You can do this in various ways – either clip by clip using Colour Space Transforms (take this input, map it to this output, for this clip) or through a colour management system, which does it at a project setting level.

While systems like ACES or DaVinci Resolve’s Colour Management can do a lot of the heavy lifting of that mapping process for you, and allow you to work more flexibly in a bigger timeline colour space sandwiched between your input and output spaces, the underlying colour science and sensor characteristics are still different.
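To make the idea of a transform a little more concrete, here is a minimal Python sketch of just the tone-curve half of an S-Log3 to Rec. 709 Gamma 2.4 conversion. It uses Sony's published S-Log3 decoding formula and a plain 2.4 power law for the display. It is a simplification under stated assumptions: it skips the gamut (primaries) conversion and any tone mapping that a real CST or colour management system would also apply, and the function names are my own.

```python
# Sony's published S-Log3 decoding constants (code values on a 10-bit scale).
_SLOG3_BREAK = 171.2102946929 / 1023.0


def slog3_to_linear(code: float) -> float:
    """Decode a normalised (0-1) S-Log3 value to scene-linear reflectance."""
    if code >= _SLOG3_BREAK:
        return (10.0 ** ((code * 1023.0 - 420.0) / 261.5)) * (0.18 + 0.01) - 0.01
    return (code * 1023.0 - 95.0) * 0.01125 / (171.2102946929 - 95.0)


def linear_to_gamma24(linear: float) -> float:
    """Encode linear light (clamped to 0-1) with a pure 2.4 power law."""
    clamped = min(max(linear, 0.0), 1.0)
    return clamped ** (1.0 / 2.4)


def simple_cst(code: float) -> float:
    """Tone-curve half of an S-Log3 -> Rec. 709 Gamma 2.4 transform.

    Assumption: no gamut matrix and no tone mapping, so highlights above
    1.0 are simply clipped. A real CST does considerably more.
    """
    return linear_to_gamma24(slog3_to_linear(code))


if __name__ == "__main__":
    mid_grey_log = 420.0 / 1023.0  # S-Log3 places 18% grey at code value 420
    print("linear:", round(slog3_to_linear(mid_grey_log), 4))
    print("display:", round(simple_cst(mid_grey_log), 4))
```

The point to notice is that the maths depends only on the declared encoding (S-Log3), not on which sensor actually produced the values.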

So your shots won’t look like they were shot with the same camera, unless you either manually match them by eye (tricky), manually match them using colour charts (if you have them) or use CineMatch (easy).

Why can’t I just use a Colour Space Transform?

If you add a colour space transform to your ARRI clip and tell it to act like Sony footage, all you’re really doing “is applying the colour science from one camera to a sensor it wasn’t designed for.”

Colour Space Transforms (CST) are not enough to make your cameras match each other accurately. A colour space transform just converts the data from one colour space to another; it doesn’t account for the unique characteristics of each sensor that originally captured the footage.

This, however, is exactly what CineMatch does – it accounts for both the profile of the sensor and the colour science.
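Here is a toy Python illustration of that point. The grey-card readings are made-up numbers, the "CST" is just a display power curve, and the sensor correction is a crude set of per-channel gains taken from a chart patch. CineMatch's actual profiling is not public and is certainly more sophisticated, so treat this purely as a sketch of why the order of operations matters.

```python
def apply_cst(rgb, curve):
    """Apply the same curve to each channel; a stand-in for a real CST."""
    return tuple(curve(c) for c in rgb)


def channel_gains(source_patch, target_patch):
    """Per-channel gains mapping the source camera's patch onto the target's."""
    return tuple(t / s for s, t in zip(source_patch, target_patch))


def apply_gains(rgb, gains):
    """Scale each channel by its gain (a very crude 'sensor match')."""
    return tuple(c * g for c, g in zip(rgb, gains))


def display_curve(x):
    """Plain 2.4 power law, used here as the shared output transform."""
    return x ** (1 / 2.4)


# Hypothetical linear RGB readings of the SAME grey card from two cameras.
camera_a_grey = (0.180, 0.180, 0.180)
camera_b_grey = (0.195, 0.176, 0.162)  # invented, slightly warm response

# 1. CST only: both clips get the same transform, so the mismatch survives.
a_out = apply_cst(camera_a_grey, display_curve)
b_out = apply_cst(camera_b_grey, display_curve)

# 2. Sensor correction first, then the CST: the grey card now agrees.
gains = channel_gains(camera_b_grey, camera_a_grey)
b_matched = apply_cst(apply_gains(camera_b_grey, gains), display_curve)

if __name__ == "__main__":
    print("A after CST:        ", a_out)
    print("B after CST only:   ", b_out)
    print("B after gains + CST:", b_matched)
```

Three gains derived from one grey patch only fix neutral tones. Saturated colours would still differ, which is why a full per-camera profile is needed for an accurate match.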

Colour Management, Colour Spaces and Colour Space Transforms Explained

If you don’t know as much as you’d like to about colour science then watch this trio of videos!

Colorist Darren Mostyn does a great job of explaining DaVinci Resolve’s Colour Management (and the principles of any colour management), what colour spaces are and how to use Colour Space Transforms, in accessible yet sufficient detail in these three videos:

- DaVinci Resolve Colour Management for Beginners – Start here!

- Colour Space Transforms Explained (and when to use them)

- Colour Space Aware Grading Tools and Timeline Colour Spaces

How FilmConvert’s CineMatch Works

CineMatch is a clever colour matching system that was built on the hard work of profiling over 70 different cameras to reverse engineer the details of the inherent characteristics of each camera’s sensor, and all of the unique colour science that makes up the way that camera captures its images.

CineMatch can then translate footage from one camera’s profile to another’s, so that the two match each other accurately.

As explained above, it is not enough to just know the colour science (which colour space and gamma curve the encoding is using); you also need to understand the differences between the sensors.

The sensor match step plus the colour science transform is what makes CineMatch the most accurate camera matching workflow available.

CineMatch

CineMatch currently supports cameras from

- ARRI

- Blackmagic Design

- Canon

- DJI

- Fujifilm

- GoPro

- Nikon

- Olympus

- Panasonic

- RED

- Sigma fp

- Sony

- Z Cam

You can also request cameras for CineMatch to profile next.

It’s available as a plugin on Mac and Windows for Adobe Premiere Pro, Blackmagic Design DaVinci Resolve and Apple’s Final Cut Pro.

You can buy each of these plugins separately for $125, but you can also buy a bundle of all three for $174, which is a no-brainer for easily moving between apps and saves you over $200!

Why should you buy CineMatch?

If all of the technical details and nuances of matching different shots by eye have become too troublesome, time-consuming or unsatisfactory, then CineMatch can save you a ton of time, effort and frustration by getting everything to a solid starting point for you, in just a handful of clicks.

You can also use it for matching skin tones, stock footage and client references in a jiffy.

It’s easy to use, you’ll save time and get better results.

I’ll get into how to do all this in just a minute…

Why you Must Ask for Metadata

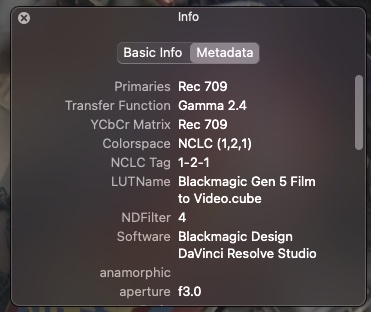

One thing to highlight here is that to get the best result from CineMatch, for each footage source you will need to know:

- The camera manufacturer

- Camera model

- Shooting profile

- Video level (full or limited data range)

This can be tricky to get hold of if you’re using stock footage (more on this later) as a lot of sites don’t include this information in the download.

Sometimes this information is tricky to get out of the client or producer although it should be easy to source from the camera person or DIT – if you have their contact details.

I asked around online to see if anyone knew of a way to read this kind of metadata (camera, shooting profile etc) from a video file and if there were any great apps to solve this problem.

From my own research there are a few free/cheap contenders that might help you, and a couple of paid options which both look like worthy champions.

Free/Cheap Video Metadata Readers

- VLC from Videolan

- Media Info from Media Area

- EXIF Tool Reader from MSAM Media (Mac GUI for EXIF reader)

- Baselight Look (free version of Baselight)

Of these tools, the free EXIF Tool Reader delivered the most informative results in my testing.
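If you're comfortable with a terminal, the command-line ExifTool that GUI readers like this wrap can be scripted too. The Python sketch below assumes `exiftool` is installed and on your PATH, and uses its `-json` output. The keyword list is my own guess at useful tag names, since the exact tags vary by camera and container, and some files simply won't carry this information.

```python
import json
import subprocess

# Substrings worth looking for. Tag names differ between cameras and
# containers, so this list is an assumption, not an ExifTool specification.
KEYWORDS = (
    "make", "model", "gamma", "gamut",
    "colorprimaries", "transfercharacteristics",
    "matrixcoefficients", "fullrange",
)


def read_tags(path: str) -> dict:
    """Run `exiftool -json` on one file and return its tags as a dict."""
    result = subprocess.run(
        ["exiftool", "-json", path],
        capture_output=True, text=True, check=True,
    )
    # ExifTool prints a JSON array with one object per input file.
    return json.loads(result.stdout)[0]


def pick_camera_tags(tags: dict) -> dict:
    """Keep only the tags whose names contain one of the KEYWORDS."""
    return {
        name: value
        for name, value in tags.items()
        if any(key in name.lower().replace(" ", "") for key in KEYWORDS)
    }


# Example usage (hypothetical filename):
#   print(pick_camera_tags(read_tags("stock_clip.mov")))
```

Filtering by keyword keeps the output short enough to scan for the camera brand, model and shooting profile.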

FYI – When using these tools, the NCLC tag of 1-1-1 represents Rec. 709.

There’s an interesting discussion of the 1-2-1 tag here.
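For reference, the three numbers in an NCLC tag are, in order, the colour primaries, the transfer function and the matrix coefficients. Here is a small Python lookup covering a handful of common code points from ITU-T H.273. The table is deliberately partial and the function name is my own.

```python
# Partial tables of ITU-T H.273 code points; only common values are listed.
PRIMARIES = {
    1: "Rec. 709",
    2: "Unspecified",
    5: "Rec. 601 (625-line)",
    6: "Rec. 601 (525-line)",
    9: "Rec. 2020",
}
TRANSFER = {
    1: "Rec. 709",
    2: "Unspecified",
    13: "sRGB",
    16: "PQ (ST 2084)",
    18: "HLG",
}
MATRIX = {
    1: "Rec. 709",
    2: "Unspecified",
    6: "Rec. 601",
    9: "Rec. 2020 (non-constant luminance)",
}


def describe_nclc(tag: str) -> dict:
    """Turn a tag such as '1-1-1' into human-readable labels."""
    primaries, transfer, matrix = (int(part) for part in tag.split("-"))
    return {
        "primaries": PRIMARIES.get(primaries, f"code {primaries}"),
        "transfer": TRANSFER.get(transfer, f"code {transfer}"),
        "matrix": MATRIX.get(matrix, f"code {matrix}"),
    }


if __name__ == "__main__":
    print(describe_nclc("1-1-1"))
    print(describe_nclc("1-2-1"))
```

Seen this way, a 1-2-1 tag is Rec. 709 primaries and matrix with the transfer function left unspecified, so the player or NLE has to decide which gamma to assume.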

Champion Video Metadata Readers

Screen from VideoVillage was recommended by editor and colorist Jamie Le Jeune when I asked the Twittersphere for help in solving this problem.

In his experience it does the best job of reading camera metadata, even compared to some camera brands’ own software tools.

In my own testing using various clips, including some stock footage downloaded from Artlist Max – which gives you access to the camera original files when available – it did the best job of reading every piece of available metadata.

It’s not cheap at $99 for a lifetime license with 1 year of updates ($29/year thereafter) but it is PACKED with a huge array of professional features and, most importantly for our purposes, does an excellent job of reading the kind of metadata we’re looking for (where it exists!)

Some of Screen’s other professional grade features include:

- Professional minimalist interface

- Supports RAW video (Red, ProRes, BMD)

- Image Sequences

- Timecode

- Crop & Letterbox on the fly

- Pixel Aspect Ratio control

- Metadata Viewer

- Quick Look Plugin (peek at DPX or RLA files)

- Massive range of supported professional codecs

- Colour Management (and by-pass)

- Add LUTs

- View individual channels

- Control Gamma and Exposure Curves

- RGB Sampler

- Take and Export Screenshots

- Customisable batch exports

So it is definitely worth those $99 if all of these features matter to your workflow.

evrExpanse 3

evrExpanse is designed to help editors make the most of their metadata, including creating new master files with enhanced metadata embedded in, but, more importantly for our purposes, it can read and export the key EXIF metadata we’re after to help make plugins like CineMatch work optimally.

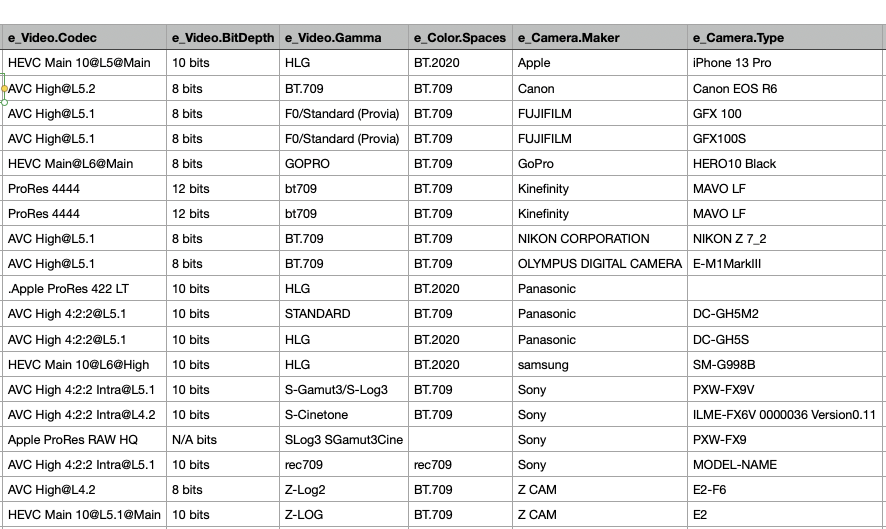

The app’s creator, Antonio Marogna, shared this screenshot of the crucial metadata that evrExpanse can read and export. He also emailed to say that as of v3.1:

“You can now extract and import the White Balance CC tag into NLEs, which contains custom configurations for the Green-Magenta and Amber-Blue axis that can be set in-camera. This results in a more accurate representation of white balance overall. The supported cameras include Canon EOS, Nikon, and Panasonic, while Z Cam, ARRI, Kinefinity, and others only have values for Amber-Blue.”

Version 3.2.2 also adds a feature to extract Video Level (Data Range) information, which will also help you improve the accuracy of your matching.

evrExpanse currently supports footage created by cameras from Panasonic, Nikon, Sony Alpha, Fujifilm, Canon, Sony XDCAM, Z Cam, Kinefinity, Atomos (Ninja & Inferno), McPRO24fps, GoPro, Apple iPhone, and FiLMIC Pro.

In my testing it correctly identified the shooting profile for a Sony FS7 stock shot (S-Gamut3.Cine/S-Log3). One of the big benefits if you’re probing a lot of shots is that evrExpanse is designed to work with folders of footage and exports a handy CSV itemising the metadata for each file.

You can process one file with the free trial or purchase a professional license for about £55/$65.

There are a few steps to take to install evrExpanse, including pre-installing Python 3 and the ASC MHL component.

Hedge your bets

Jamie also made a great suggestion that if your footage is offloaded on set using an app like Hedge (my favourite) then these metadata details can be noted and stored at that point too.

Testing CineMatch from FilmConvert

Before I dive in any further it’s worth mentioning that you can try CineMatch for yourself thanks to their unlimited, watermarked, free trial, which supports all of their camera packs, so you can test it on the footage you already have.

There is an excellent, detailed and informative workflow guide for each of the plugins here – which is absolutely worth a read, to ensure you’re getting the best out of the software.

CineMatch for Beginners

Applying CineMatch is very straightforward if you know those three essential pieces of metadata (camera brand, model and shooting profile), which, thanks to the apps above, I do for my test clips from Artlist Max.

Drop it onto the clip you want to match and select your source and target camera settings.

All the camera packs come pre-installed, so you don’t need to download them individually as you do with FilmConvert’s Nitrate plugin. This makes CineMatch easier to use, but it also takes up a bit more space on your system.

In the advanced settings you can set the data range of the source and target cameras. CineMatch defaults to the ‘native in-camera setting’, but you can toggle between Full and Limited here if you need to adjust your contrast.

Simply following the flow of the CineMatch controls will take you through a sensible order of operations when it comes to colour grading:
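To see why the wrong data range shows up as a contrast problem, here is a minimal Python sketch of the standard scaling between limited ("video") and full ("data") levels for luma. Limited range places black at 16 and white at 235 in 8-bit, which is 64 and 940 in 10-bit. The function names are mine, and chroma channels (which use a slightly different limited range) are left out.

```python
def _levels(bit_depth: int):
    """Return (black, white, max_code) for limited-range luma at this bit depth."""
    scale = 2 ** (bit_depth - 8)
    return 16 * scale, 235 * scale, 2 ** bit_depth - 1


def limited_to_full(code: float, bit_depth: int = 10) -> float:
    """Stretch a limited-range luma code value to span the full range."""
    black, white, max_code = _levels(bit_depth)
    return (code - black) / (white - black) * max_code


def full_to_limited(code: float, bit_depth: int = 10) -> float:
    """Squeeze a full-range luma code value into the limited range."""
    black, white, max_code = _levels(bit_depth)
    return black + code / max_code * (white - black)


if __name__ == "__main__":
    # Limited-range black and white, correctly expanded to full range.
    print(limited_to_full(64), limited_to_full(940))
    # The same values read as if they were already full range:
    # black is lifted and white is dimmed, so the image looks washed out.
    print(round(64 / 1023, 3), round(940 / 1023, 3))
```

If a limited-range clip is interpreted as full range, black sits at roughly 6% and white at roughly 92%, giving a flat image. The reverse mistake crushes shadows and clips highlights.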

- matching the sensors

- making in-camera style adjustments

- refining the match with an HSL qualifier

- and finally, additional secondary controls

How easy is it to match shots?

This kind of depends on how different the shots are, but the process itself is very easy!

Watch the video above to see how to go about this in practice, but all you have to do is:

- Apply CineMatch to both of your cameras (e.g. iPhone and ARRI Alexa)

- On your Alexa clip set the source camera settings (e.g. ARRI, Alexa Mini, LogC)

- On your iPhone clip set the source camera settings (e.g. iPhone 12 Pro Max, FilMic Log v3)

- On your iPhone clip set the target camera settings (e.g. ARRI, Alexa Mini, LogC)

Once this is established you can then refine your exposure, white balance and HSL settings to further dial in your match.

Get more from your LUT Collection

Another tip, shared by Nick from FilmConvert in the video above, is to use FilmConvert’s sensor matching process to make sure that your source footage is set up to better match with a LUT that you’re applying to it – that may have been created with a specific camera in mind.

So for example, you could match your iPhone footage to the ARRI camera pack and then apply an ARRI-based LUT to your iPhone footage, knowing you’ll get a more accurate final look.

False Colour for Beginners

One of the really useful features in CineMatch is the false colour viewer. If you’ve not really worked with False Colours before, it’s another way of viewing your image to see it from a different perspective, depending on the setting you choose.

CineMatch offers 4 false colour views:

- Middle Grey

- Skintones

- Temperature

- Tint

In the video above, Nick demonstrates how to use each of these false colour tools in turn. But essentially, you turn on false colour, select one of the four areas you want to isolate, for example, skintones, and make adjustments to your exposure, temp and tint controls until the skintones are illuminated in the false colour.
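If the idea of false colour is new to you, this tiny Python sketch shows the general principle: work out each pixel's luma, then paint any pixel that lands inside a target band with a flag colour and show everything else as grey. It is not CineMatch's implementation. The target level, tolerance and flag colour are arbitrary values I picked for illustration, and the luma weights are the standard Rec. 709 coefficients.

```python
REC709_LUMA = (0.2126, 0.7152, 0.0722)


def luma(rgb):
    """Weighted sum of R, G and B using the Rec. 709 luma coefficients."""
    return sum(weight * channel for weight, channel in zip(REC709_LUMA, rgb))


def false_colour(pixels, target=0.45, tolerance=0.03, flag=(0.0, 1.0, 0.0)):
    """Flag pixels whose luma is within `tolerance` of `target`.

    Pixels inside the band become the flag colour; all others are
    returned as neutral grey at their own luma level.
    """
    result = []
    for rgb in pixels:
        y = luma(rgb)
        result.append(flag if abs(y - target) <= tolerance else (y, y, y))
    return result


if __name__ == "__main__":
    frame = [(0.45, 0.45, 0.45), (0.90, 0.90, 0.90), (0.50, 0.44, 0.40)]
    for original, shown in zip(frame, false_colour(frame)):
        print(original, "->", shown)
```

Adjusting exposure until the part of the frame you care about lights up in the flag colour is the same feedback loop as the false colour views described above.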

You can learn more about these tools in the official guide.

CineMatch – An Editor’s Review

If you’re someone who spends a lot of time grading in your video editing software of choice, then CineMatch, just as a grading UI, likely represents a decent upgrade to the native feature set you have at your disposal. (Unless you’re in Resolve of course!)

And when it comes to the finicky process of actually getting shots to match, even when they originated from the same camera, I personally need all the help I can get! So being able to leverage CineMatch’s database of sensor characteristics and colour science magic is well worth the price of admission to me, in terms of time saved, frustration eliminated and results delivered.

One of the nice things about CineMatch’s colour pipeline is that it operates on your log footage, even if you’ve already applied an output transform, so you can merrily crank up your controls, without worrying about clipping in your shot.

The CineMatch workflow guide also recommends applying the CineMatch plugin to your A-Camera footage (the one you’re matching ‘to’), even though it doesn’t need to be matched to anything itself, as:

The Primary Corrections in CineMatch are customised to the response curve of your camera, so you will get more accurate and intuitive results with the CineMatch controls than the generic sliders in your editor.

CineMatch Workflow Guide

As you can see in the image above, this gives you some distinct advantages over using the Premiere Pro native Lumetri plugin, including (to my eye) a much richer depth and more natural interpretation to the colours even with no initial adjustments. For example, look at the tone of the turquoise wall behind the motorbike.

With a bit of tweaking I was able to get nice looking results on both clips, but it was also more apparent just how differently the log controls operate, compared to the Lumetri sliders, when you switch back and forth between them.

This might take a bit of getting used to, if, like me, you’ve spent a lot of time grading in Lumetri.

As part of my own stress-testing, I tried to match a multi-camera shoot that involved some really badly shot footage (massively incorrect white balance, underexposure) and a reasonably well shot log image. In this tricky situation CineMatch’s workflow and controls certainly helped me get a much nicer looking image than I had managed previously with Lumetri alone.

Ultimately, the fluidity and responsiveness of the CineMatch controls were really enjoyable to use, and felt much more fine-grained than working in the Lumetri colour panel.

The UI looks nicer too!

In conclusion, adding CineMatch to your toolkit will likely be a smart decision, especially if you’re used to handling a lot of different footage from a lot of different cameras, which happens all the time if you’re a freelance editor like me!

Save 10% on CineMatch with the discount code “ELWYN” or download their unlimited free trial here, and test it out for yourself.

Tips and Tricks for CineMatch

Here are some of the best tutorials I could find to help you add a bit of polish and depth to your use of CineMatch.

This tutorial from CineCom does a good job of covering more than the basics of CineMatch and demonstrating how well it matches the footage from four different cameras – in a split-screen test in which I failed to identify the ‘real’ RED camera footage. (Test yourself!)

They also have a great tip for using CineMatch to match skin tones across your cameras.

Using CineMatch in DaVinci Resolve

Sidney Baker Green does an excellent job of demonstrating how to use CineMatch in DaVinci Resolve and shares some great tips for using Resolve’s feature set to finesse your final image.