Editing 360 and VR Video

- Understand the essential terms, concepts and technology for VR

- Learn VR’s post-production pipeline in Premiere and FCPX

- Does VR narrative filmmaking have a future?

Virtual Reality (VR) games, video and other immersive experiences may well be the future. It’s a technology and a medium that’s picking up quite a bit of steam of late, with many big names buying in for billions of dollars. Even though it’s been around for nearly 30 years, VR is coming of age.

This post should give you a pretty good schooling in the fundamentals of VR and 360 video, the various concepts you need to understand and some valuable resources to help you dive deeper into the topic if you wish. I’m not going to cover the cameras much at all (this is a post production blog, after all), but you’ll get a flavour of that side of things from some of the talks anyway.

The post will also cover editing VR video in Premiere Pro and FCPX as well as a few thoughts on the creative challenges of telling stories in a compelling fashion, in this unprecedented medium.

Understanding VR Video Basics

Neil Smith from the Lumaforge VR lab delivers an amusing and quick-fire overview of the world of VR and 360 storytelling in this first part of a presentation delivered at a recent LACPUG evening. If you watch nothing else, watch this as it lays a solid foundation for understanding what all the fuss is about and where we are in the technological and creative development of VR.

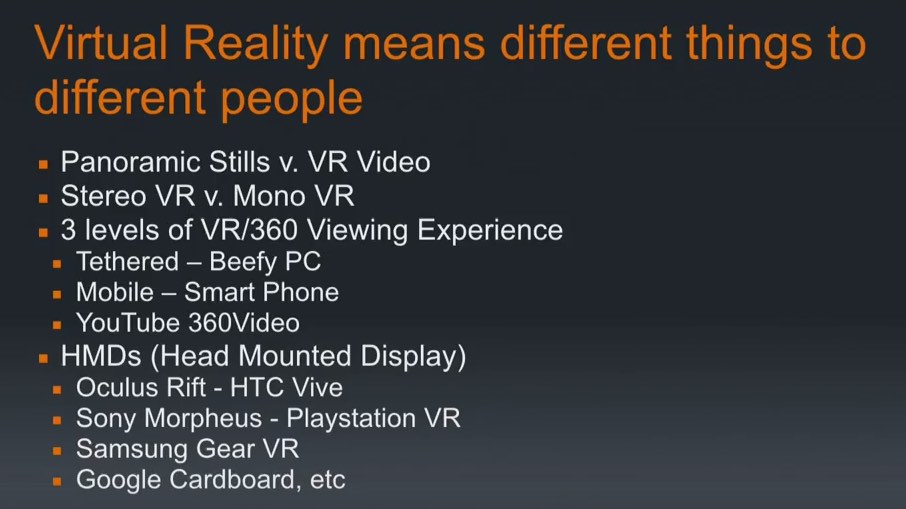

In his presentation Neil helpfully dissects all the different things that VR means to different people. Even in the title of this post I’ve called it two different things (360 video and VR video) so what is the difference between ‘Full’ VR and VR/360 Video?

The dividing line is currently between the traditional concepts of video games and video. Full VR is generated live and is responsive to the user’s interactions, like a video game. VR video is a 360 sphere of video that allows the viewer to look where they like, but that’s the limit of their interaction with it. So for the purposes of this post 360 video and VR video are effectively the same thing.

Stereo VR vs Mono VR is the same difference as that between a 3D movie and 2D movie. For more on stereoscopic VR check out this post from 360Labs.

The whole goal of the best VR is what Neil terms ‘Presence’: you are so immersed in the experience that your brain tricks itself into believing you are really there. Bad VR makes you vomit. So if you thought the headache from 3D glasses was bad, just wait for some shoddy VR.

In fact some people (see this Wired article) would prefer it if we didn’t call 360 video, VR at all.

In the short term, 360 video offers a relatively cheap bridge to the new medium. It’s fast. You can draft off of the many existing player infrastructures and creating 360 video adds just a few steps to the existing toolchain for video production. It’s easy. Because you can swipe your finger on the screen to explore a 360 video, your potential audience isn’t limited to owners of VR goggles.

It isn’t really good, though. This is just the latest example of content creators shoehorning old formats into new technologies. Like magazines and encyclopedia delivered on CD-ROM or mobile apps that were nothing more than wrappers for websites, 360 video ultimately will be supplanted by native VR content that embraces the medium and delivers new experiences impossible to recreate outside of VR. And they won’t make you feel like you’re going to throw up.

https://vimeo.com/158518367

Alex Gollner of Alex4d.com provides a brilliant walk-through of the entire VR production pipeline and all of the pitfalls and challenges involved, before demonstrating how to use Tim Dashwood’s FCPX VR editing plugin in this excellent Soho Editors presentation from BVE 2016.

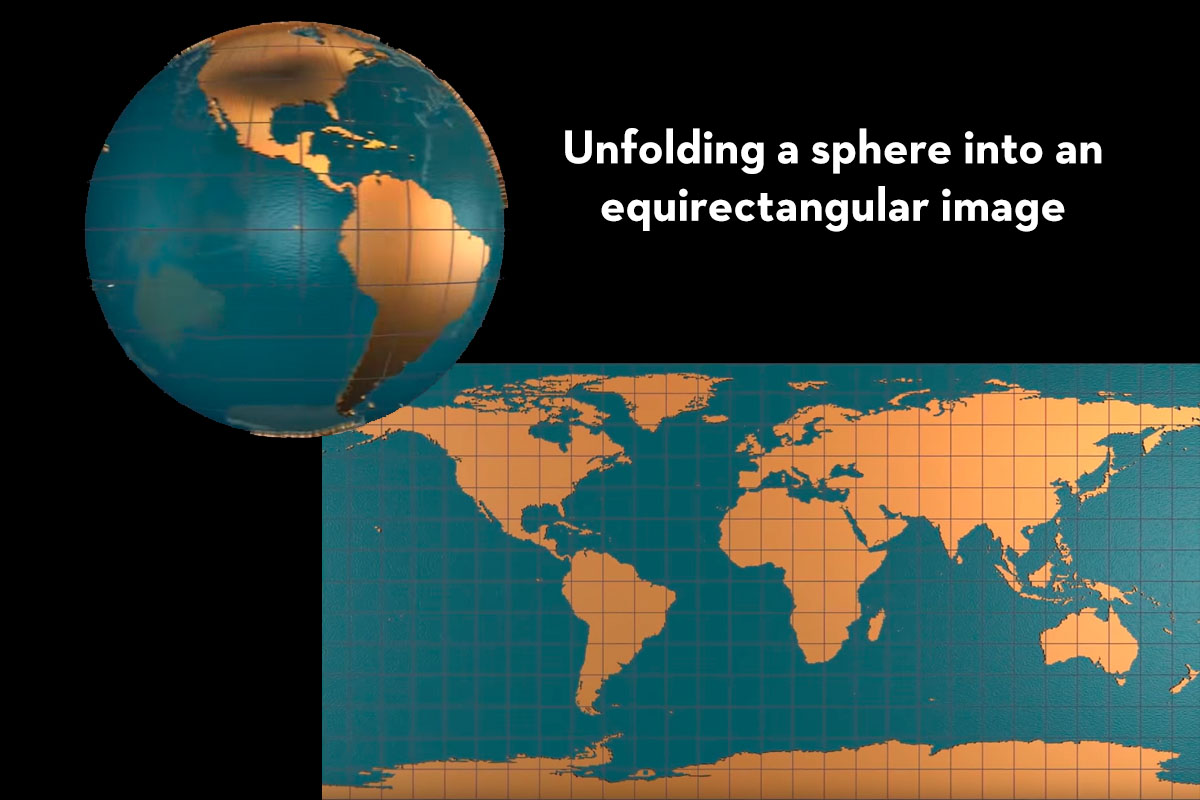

At 11.45 Alex explains what’s involved in the stitching and creation of equirectangular footage. This is what you would get if you took a spherical globe and unfolded it to lay it out flat, like a wall-chart map. The problems created by shooting with multiple cameras to form a 360 sphere are legion and include the need to stitch together those separate squares (portions of the map) into one seamless sphere.
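To make the ‘unfolded globe’ idea a bit more concrete, here is a minimal sketch of how a viewing direction could map onto a pixel in an equirectangular frame. The function name, the angle conventions (yaw 0 meaning straight ahead, pitch 90 meaning straight up) and the 4096 x 2048 default are my own assumptions for illustration, not anything taken from Alex’s talk or a particular stitching tool.

```python
def direction_to_pixel(yaw_deg, pitch_deg, width=4096, height=2048):
    """Map a viewing direction to (x, y) in an equirectangular frame.

    Assumed conventions (hypothetical, for illustration only):
      yaw_deg   -180..180, where 0 is straight ahead
      pitch_deg  -90..90,  where 90 is straight up
    """
    u = (yaw_deg + 180.0) / 360.0   # 0..1 across the frame (longitude)
    v = (90.0 - pitch_deg) / 180.0  # 0..1 down the frame (latitude)
    # Clamp so the far right and bottom edges stay inside the image.
    x = min(int(u * width), width - 1)
    y = min(int(v * height), height - 1)
    return x, y


# Looking straight ahead lands in the middle of the frame.
print(direction_to_pixel(0, 0))
```

Longitude runs evenly across the width and latitude evenly down the height, which is why the top and bottom of the frame look so stretched: a single point at each pole is smeared across an entire row of pixels.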

You also have to figure out where to hide the crew, lights and other gaffs, which leads to a lot of compositing. Stitching seems to be the real hurdle currently to easily creating a seamless VR experience.

Alex also points out that most VR video is shot at 4K resolution (4096 x 2048) and above, resulting in an effective viewing resolution of 720p through the head mounted display. So you need the horsepower of 4K but you only get to see 720p at any one time.
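A rough back-of-the-envelope calculation shows where a figure like that comes from. This sketch simply spreads the frame’s pixels evenly over 360 by 180 degrees and asks how many fall inside the headset’s field of view. The 110 by 65 degree field of view is an assumption I’ve picked for illustration (real headsets vary), and the linear estimate ignores lens and projection distortion.

```python
def visible_pixels(frame_width, frame_height, h_fov_deg, v_fov_deg):
    """Estimate how many pixels of an equirectangular frame are in view."""
    px_per_degree_h = frame_width / 360.0   # full width covers 360 degrees
    px_per_degree_v = frame_height / 180.0  # full height covers 180 degrees
    return (round(px_per_degree_h * h_fov_deg),
            round(px_per_degree_v * v_fov_deg))


# Assumed field of view of 110 x 65 degrees on a 4096 x 2048 frame.
print(visible_pixels(4096, 2048, 110, 65))
```

With those assumed numbers the visible window comes out in the neighbourhood of 1280 x 720, which is why a 4K master only ever looks like roughly 720p to the viewer.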

Alex’s entire presentation is well worth a watch and provides a great insight into the world of VR from an editor’s perspective.

You should also check out Alex’s short but informative VR FAQ here. He makes an interesting comment that maybe some VR won’t even be a full 360 of seamless video.

Q. Why isn’t this FAQ called ‘360° Video FAQ?’

Although the vast majority of VR video today is about capturing, modifying and experiencing a full sphere of video, it is likely that there will be many uses of fractions of video spheres.

For now most people experiencing VR video initially look all around the sphere: up, down, behind. After that initial exploration, they spend the vast majority of their time hardly ever looking behind.

Single fisheye lens cameras can now capture as much as 235° of the video sphere. This means the whole stitching process can be avoided. You don’t have to leave un-captured 125° (or 180°) rest of the video sphere dark. You can use post production tools to composite stills or motion graphics that add to the experience: “to get more information, look behind you/down/up.”

At just over 12 minutes it’s hard to beat this video as an effective primer on the nitty gritty of the kind of VR we’ve been talking about so far. Michael Kammes’ 5 Things episode on VR/360 video provides a fine explanation of the current state of affairs when it comes to answering the questions – What is VR? How do I shoot VR? How do I edit VR? How do I view VR? and What’s the future of VR?

Michael offers several fistfuls of great practical tips on creating your first VR video, including many helpful visual explanations of the key terms such as stitching equirectangular footage. Well worth a watch.

Lastly, it’s worth noting that, as far as I know, when it comes to remote client review services (check out this detailed comparison post for all the main players) Lookat.io is the only one to currently support 360/VR video. So if your client isn’t in the room with you, they might be the best platform to share client review videos on.

You can of course use YouTube and the Google Chrome browser for viewings, but it won’t have the client feedback capabilities that LookAt provides.

VR Gear – Head Mounted Displays (HMD)

There are numerous VR head mounted displays (HMD) available to buy, for a wide variety of budgets and technological partners. Here are four of the main ones, soon to be joined by the Playstation VR headset. These Head Mounted Displays are the ones most commonly referred to during the presentations included in this post and I won’t go into too much detail here.

The Oculus Rift is the main player and probably the main ‘household name’ other than Google Cardboard, when it comes to VR Gear. It doesn’t hurt that Facebook bought them for $2 billion before they’d even shipped a product.

Oculus Rift’s advanced display technology combined with its precise, low-latency constellation tracking system enables the sensation of presence.

Of all the HMDs listed here it’s the most expensive at $805/£899, but it’s also the most advanced, and designed for ‘Full VR’ computer games and experiences, whereas the others are more geared towards VR Video. The Oculus Rift is also the only headset in this line up to incorporate audio.

You’ll need to hook it up to a powerful computer or games console, with some serious GPU hardware, via the HDMI cable. Check out this Wearable article for a full breakdown of this desirable piece of tech.

Buy Oculus Rift on Amazon.com | Buy on Amazon.co.uk

UPDATE – I forgot to mention the HTC Vive which is $1000 and created in partnership with games developer Valve, and is Oculus’s main competition.

The Samsung Gear VR is an $89/£63 version of Google Cardboard and designed exclusively for Samsung phones. The principle is the same though in that you place your phone in the headset and view it through two lenses.

This is primarily aimed at 360/VR video viewers although you can of course play games and enjoy stereoscopic 3D on it, just to the level of the performance of a mobile phone vs a powerful games console or PC with the Oculus Rift or Playstation VR.

Buy Samsung Gear VR on Amazon.com | Buy on Amazon.co.uk

The Mattel View Master VR headset is probably the best thing to buy if you, or a member of your family, want to give this whole VR thing a try for the first time. As Alex Gollner helpfully pointed out in his talk, if your kid drops this, they’ll just break the plastic and not your smartphone.

At $17/£16 this is probably the best trade off in terms of functionality and cost. It’s sort of surprising that it doesn’t come with a head-strap, but hey ho, it’s pretty cheap. As with Google Cardboard and the Samsung Gear VR you just clip your smartphone into the goggles and you’re all set.

Buy Mattel View Master VR on Amazon.com | Buy on Amazon.co.uk

Given that the price is effectively the same as the Mattel View Master VR*, I’m not sure why you would want to go for the Google Cardboard over the plastic counterpart, but this is the original device as hacked together by Google – in part as a joke at Facebook for spending $2 billion on something they could approximate for a couple of bucks.

This version isn’t actually made by Google, but it is the best seller for this kind of thing on Amazon. (*In the U.S. at least, in the UK it’s only £9.99)

Buy Google Cardboard on Amazon.com | Buy on Amazon.co.uk

VR In Depth

https://vimeo.com/168146156

In these following seminars you can dive quite a bit deeper into the world of VR, especially as it relates to VR filmmaking. In this first three part video series you can get a look at the Digital Cinema Society’s event focusing on VR which is effectively a masterclass in VR filmmaking from Richard Taylor II.

Richard covers both the technical and creative constraints of creating effective VR experiences and the best-practices from a craft perspective, based on his many years of working on numerous high end projects.

https://vimeo.com/168190709

In the second video Stuart English from Nokia demonstrates the Nokia OZO VR capture system and talks through in detail the benefits of using a purpose built VR camera over a VR rig system.

It’s a pretty unique system and if you’re into shooting VR then this will be well worth a watch, if not to buy an OZO, then to understand some of the deeper technical issues and solutions in modern cameras across the board, and in VR.

As Stuart describes it, using a system like the OZO eliminates many of the difficulties in post-production created by VR camera rigs and when you only have to transfer one card the DIT workflow is much easier!

Oh, and it only costs $60,000, batteries and storage are extra.

https://vimeo.com/168146158

In part three Richard Taylor II returns to talk about simulations, virtual theatre and panoramas. If you’ve got the time for it, it’s an eye-opening tour of the current world of VR and similar technologies and entertainment experiences.

AOTG.com has a brilliant guide to effectively creating your first VR video in this article from Kenzie Audette, a post/production assistant at Frontline. (Any relation to Steve Audette I wonder?)

In the spirit of helping other journalists and filmmakers dive into this emerging medium, I want to break down the good, the bad, and the ugly of virtual reality – as well as some exciting new technology coming down the pipeline.

For the last year at FRONTLINE, the investigative documentary series on PBS, we have been creating 360 VR documentaries. We’ve transported viewers to the heart of the fight against Ebola, taken them on a critical mission to deliver food in South Sudan and offered a rare on-the-ground look at what’s left of Chernobyl. This past Sunday, we released our latest 360 film – Night of the Storm – the harrowing story of a family hit hard by Superstorm Sandy.

Kenzie covers everything from camera options, common problems and creative solutions to creating engaging VR content, and it’s a great read.

One way to get positional audio in your video is to use a game engine to connect sounds to “objects” in a video. Using some post processing effects like reverb can help simulate the effect of 3-D audio. This seems like an easier solution to making VR audio a reality. Very similar to the method used to make 3-D audio in video games, the technology is already here and can be repurposed.
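As a very crude illustration of the idea of tying a sound to an ‘object’, the sketch below works out left and right channel gains from where the sound source sits relative to where the viewer’s head is pointing. It is a simple constant-power stereo pan that I’ve swapped in for illustration, not the 3-D audio method used by any particular game engine, and the function name and angle conventions are my own assumptions.

```python
import math


def stereo_gains(source_yaw_deg, head_yaw_deg):
    """Return (left_gain, right_gain) for a sound at source_yaw_deg.

    Assumed convention (hypothetical): yaw increases to the viewer's right.
    """
    # Angle of the source relative to the head, wrapped to -180..180.
    rel = (source_yaw_deg - head_yaw_deg + 180.0) % 360.0 - 180.0
    # Fold sounds behind the viewer onto the front so the pan stays in -90..90.
    if rel > 90.0:
        rel = 180.0 - rel
    elif rel < -90.0:
        rel = -180.0 - rel
    pan = rel / 90.0                     # -1 = hard left, 1 = hard right
    angle = (pan + 1.0) * math.pi / 4.0  # 0..pi/2
    # Constant-power pan: left^2 + right^2 always equals 1.
    return math.cos(angle), math.sin(angle)


# The same sound shifts from centre to hard left as the viewer turns right.
print(stereo_gains(0, 0))
print(stereo_gains(0, 90))
```

Real positional audio does far more than this (front/back cues, elevation, reverb), but the core principle is the same: the mix is recalculated from the head orientation rather than baked into the file.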

In this three part Visual Effects Society (VES) presentation on VR Post Production, you can learn a lot about the current state of the technical and creative possibilities when it comes to VR post. There were several different presentations during the day, moderated by Scott Squires, Academy Tech Award Winning Visual Effects Supervisor and Developer.

- Mariana Acuña Acosta, Media & Production Creative Manager, The Foundry, describing the latest set of tools that The Foundry is working on to help solve the day to day problems of working with VR.

- Gawain Liddiard, VFX Supervisor, The Mill and his team, presented the methodology and techniques used to deliver the Google Spotlight live action VR project “Help”, directed by Justin Lin, of “Fast and Furious” fame.

- Jason Schugardt, Head of CG/VFX Supervisor with MPC LA will share the tools and techniques used to create the live action/CG VR adventure, “Goosebumps”. Goosebumps VR is a stereoscopic special venue experience that premiered in conjunction with Sony’s feature film in select theatre lobbies.

- Chris Healer, Founder and CEO of The Molecule talks about the ins and outs of the stitching, rendering, and visual effects workflow on Doug Liman’s “Invisible”, the first episodic action-adventure series created in immersive virtual reality.

Editing 360 and VR Video in Premiere

When it comes to editing your VR footage in your NLE there are professional plugins for both Premiere and FCPX that will help you get the job done. In fact, in the latest release of Adobe Premiere (June 2016) Adobe have added in a VR preview viewer, working with stitched equirectangular media for both stereoscopic and mono VR video.

Rocketstock has a simple step by step guide to editing 360 video in Premiere in this blog post, covering ingest to export.

Using a third-party metadata tool used to be the only solution for exporting VR footage to YouTube and Facebook. Now, thanks to Adobe’s 2016 update, you can apply the necessary meta data directly in Premiere.

To export your footage, select your timeline and hit Command+M or navigate to File>Export>Media. This will pop up your export window. Once you select your desired codec, navigate to the Video tab and make sure the VR Video checkbox is selected.

In this older video from 2015, you can enjoy a good lesson in a full Creative Cloud Suite workflow for 360 video using After Effects, Premiere and Photoshop.

https://vimeo.com/157744603

When it comes to professional level plugins for Premiere Pro, Mettle seem to be the company of choice. Tim Dashwood’s 360VR Toolbox also works in Premiere and After Effects, although he seems to be better known to the FCPX community.

In this 17 minute tutorial from Mettle’s Charles Yeager, you can walk through an overview of the Skybox VR tools for Premiere Pro, which are the same After Effects tools ported into Premiere.

Charles covers correcting your footage with the pan and tilt and roll functions, as well as applying the 360 blur, glow and sharpen effects. Later on in the tutorial you can learn how to correctly insert 2D logos and text into your 360 video. Mettle’s Premiere Skybox 360/VR plugin costs $189.
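To get a feel for why a pan correction is the simplest of the three, here is a minimal sketch of re-centring an equirectangular image around the vertical axis. Because longitude maps evenly across the width, a pan is just a wrap-around shift of pixel columns. This is my own illustration and not how Mettle’s plugin is implemented. The function name and the shift direction are assumptions, and tilt and roll are deliberately left out because they need a proper spherical resample.

```python
def pan_equirectangular(rows, yaw_deg):
    """Pan an equirectangular image by yaw_deg around the vertical axis.

    rows is a list of rows, each a list of pixel values (hypothetical
    stand-in for real image data).
    """
    width = len(rows[0])
    # 360 degrees corresponds to the full width, so convert to columns.
    shift = round(width * yaw_deg / 360.0) % width
    if shift == 0:
        return [list(row) for row in rows]
    # Wrap the last `shift` columns round to the front of each row.
    return [row[-shift:] + row[:-shift] for row in rows]


# A tiny 4-pixel-wide 'image': a 90 degree pan moves everything one column.
print(pan_equirectangular([[0, 1, 2, 3]], 90))
```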

For even more Mettle tutorials check out this page on their site.

https://vimeo.com/151866101

Mettle also have a free VR player which allows you to preview your work in Premiere or After Effects, via an attached Oculus Rift or HTC Vive. This looks like an extremely effective way to experience your 360 footage and jump back and forth to make tweaks, without having to take the headset on and off.

In this detailed tutorial Tim Dashwood walks through the truths and myths of what is possible in the new Premiere Pro update when it comes to VR and what isn’t. He also demonstrates using his VR plugins in Premiere Pro.

Editing 360 and VR in FCPX

https://vimeo.com/164771652

Tim Dashwood’s FCPX VR plugins are the go-to toolkit for anyone cutting VR in FCPX, potentially because they’re the only option right now, as far as I know. In a similar presentation to his BVE talk, Alex Gollner walks through a primer on VR and working in FCPX with the 360VR toolkit. The tools work in essentially the same fashion in Premiere too.

Tim Dashwood rounds out the presentation with a quick description of some of his newly updated FCPX VR plugins including the ability to use stereo 360 particle emitters as well as tools to deal with ‘the stereoscopic problem’ in VR. Tim’s passion and expertise are self-evident in just a few minutes!

Alex makes a good point that the Dashwood Toolkit now comes in two price points, the fully fledged 360VR Toolkit for $1119 and the 360VR Express version for $119. The only difference between the two is that the Express version doesn’t work with stereoscopic 3D, which seems like a great deal to me! There are also free watermarked demos to download.

Both are available via FXFactory which, once you install it, opens up your edit suite to numerous free plugins as well as a vast collection of useful post plugins, effects and transitions.

In this 3-part MacBreak Studio series the boys from Ripple Training walk through the world of VR and FCPX in about 40 minutes. In the first episode Mark Spencer and Steve Martin cover much of the same ground as Alex Gollner in his presentations, but in their own inimitable fashion, and provide specific details on working with the Theta S from Ricoh.

In the second episode Mark and Steve discuss why you might want to shoot in 360 and deliver a flat image. As part of this episode Mark demos the Revolve 360 plugin from Sugar FX, also available from FX Factory, and more of Tim Dashwood’s plugins.

What’s great about this episode is that it demonstrates that you can take VR in unique directions if you’re willing to think creatively.

The third episode of the series demonstrates some of the higher-end post production considerations when working with stereoscopic equirectangular footage, from a camera like the $60,000 Nokia Ozo and Tim Dashwood’s 360VR toolkit (the expensive version).

The Foundry’s Cara VR Plugin

https://vimeo.com/161916996

As a quick aside if you’re working at the higher-higher end of things The Foundry have created a VR toolset for Nuke called Cara. Starting at £3,295/$4,295 it’s aimed at solving some of the complex challenges of stitching and compositing in stereo VR.

Studio Daily has a nice little article about it here, which includes this video featuring New Deal Studios.

GPU acceleration allows Nuke’s compositing tools to work directly with 360-degree footage, with a Spherical Transform node making it easier to work with different projection types. And projects can be previewed on HTC and Oculus headsets directly from the Nuke Studio timeline.

Features include:

- Camera Rig Solving

- Stitching

- Colour Correction and Matching

- 360 tracking and stabilisation

- VR compositing workflows

- 360 stereo rendering and slit-scan shader

- Head Mounted Display Review Output

The Craft of VR Filmmaking

Some of the questions that VR throws up from a creative storytelling point of view are: how do you work within a frameless paradigm? How do you control where the audience looks and shape their emotions? How does editing – jumps in time, space and cross cutting – work in VR? Do these concepts even translate into VR filmmaking?

In this LACPUG meeting video Matt Gratzner, VFX Supervisor of New Deal Studios, talks through his process for creating his Cinematic Narrative VR films. New Deal Studios were traditionally a miniature effects house, most recently working on Interstellar, but have since expanded into VR.

As part of Matt’s talk he suggests that the most important thing to be creating right now, to sustain the VR bandwagon, is cinematic VR content which contains a really strong story – even if the stitching or tech side of things isn’t quite perfect – if the story is great, people will be hooked.

YouTube’s 360 video channel has 1.4 million subscribers with plenty of content, ranging in quality from great to terrible.

Google’s short film Pearl demonstrates some answers to the questions I posed at the beginning of this section. Directed by Patrick Osborne, the film is actually VR, in that it’s being created on the fly as you interact with it.

“When you’re immersed in a story, it’s all around you,” he said. “How do we tell you where to look? How do we prevent you from getting lost or missing an important part of the story?”

He showed a few techniques for guiding the user’s attention — changes in music, helper characters that bring you back the action’s focus, and so on. But the only real solution seems to be multiple viewings — and in order to accommodate that, it’s worthwhile for the director and team to make sure there’s content in all directions.

In this moving TED talk from Chris Milk, an ‘immersive storyteller’, he traces his own journey from music to music videos to VR, which he describes as the last medium for storytelling because it closes the gap between audience and storyteller. This TED talk also includes the world’s largest collective VR experience (apparently).

Milk says VR will be the last medium because it removes the ‘translation gap’ between the author’s expression and your experience of it. He suggests that we’re at year one of the VR experience and we need to move past the spectacle and into the storytelling.

It’s a powerful and helpful talk for anyone interested in the current ‘state of the art’ and its embryonic future.

Alex Gollner shares some helpful notes on lessons in sound design from a podcast on VR Video Games. As with filmmaking, the sound design and its environmental impact matter.

Although VR audio tools have yet to be integrated into NLEs, audio experts (and video editors who spend much of their time refining their soundtracks) should consider how audio design is different for VR.

Those designing audio for VR games are probably further along in coming up with what makes VR different…

[23:33] ‘Dynamic range is back – we’re not crushing everything any more’ – adding heavy compression doesn’t work – it just makes everything loud. ‘VR audio will be pretty uncompressed.’ Prepare for the fact that different audio soundtracks work for different playing environments. Most VR experiences will be in quiet environments, but some will be in noisy places – which will need compression to punch through.
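If ‘crushing’ dynamic range sounds abstract, this small sketch shows what a basic compressor does to levels measured in decibels. It is a textbook-style static compressor curve I’ve written for illustration, not something from the podcast, and the threshold and ratio values are arbitrary assumptions.

```python
def compress_db(level_db, threshold_db=-20.0, ratio=4.0):
    """Apply a simple static compression curve to a level in dB.

    Levels under the threshold pass through untouched. Anything above it
    only rises by 1 dB for every `ratio` dB of input.
    """
    if level_db <= threshold_db:
        return level_db
    return threshold_db + (level_db - threshold_db) / ratio


quiet, loud = -40.0, 0.0
print("before:", loud - quiet, "dB of dynamic range")
print("after: ", compress_db(loud) - compress_db(quiet), "dB of dynamic range")
```

With these assumed settings the gap between the quietest and loudest moments shrinks from 40 dB to 25 dB. Turn the result back up to the original peak level and the quiet sounds end up much louder than they were, which is the ‘everything is loud’ effect being described.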

AR vs VR

#VR will be a mildly successful precursor to ubiquitous #AR – Barry Sandrew @MovieProducer https://t.co/H5FdpnoLUz pic.twitter.com/wtkGGnBh3L

— Alex Gollner (@Alex4D) July 7, 2016

For some people the future isn’t even in VR but in AR. Augmented Reality is what you’re seeing in Pokemon Go and it seems to be doing pretty well! It has added $9 billion to Nintendo’s value and has more active users than Twitter (in the U.S.) after just one week, with people spending more time in the app than Facebook.

It will be interesting to watch, and participate in, the trajectory of these two technologies and their creative storytelling potential.

Pokémon Go adds $9B to Nintendo’s value, global rollout continues this week https://t.co/PG1kq1OEhp pic.twitter.com/SHySKha2QB

— TechCrunch (@TechCrunch) July 11, 2016

Jumped on the @Pokemon Go Bandwagon…tons of fun with a 4 1/2 year old…forget VR, AR is awesome #PokemonGO pic.twitter.com/YYojZGuTPH

— Brady Betzel (@allbetzroff) July 11, 2016

Pixar’s President, Ed Catmull, also had some interesting things to say about VR and AR in this Studio Daily interview. He’s pretty convinced VR isn’t the way to go for narrative storytelling, but he hedges his bets too. Either way, he created Pixar, so he probably knows what he’s talking about!

What’s your take on virtual reality?

As you know very well, it’s been around for 30 years. The only thing that changed was that they got rid of the lag time, which led to a perceptual difference in what we experienced. It didn’t change the other problems, but it made it possible to think about new possibilities. I don’t know what all of the uses are, but I have a rough idea. When you look at the full scope — at shared experiences, games, explorations of worlds, new stories and as VR as a tool in industries beyond ours — I find that exciting. But storytelling in VR, that’s a hard problem. I’m not skeptical about VR in gaming, theme park experience, shared experiences across the internet, in layout and design for animation, and in AR. But I am skeptical about theatrical storytelling in VR.

Why are you skeptical about theatrical storytelling in VR?

One reason. Theatrical storytelling does not take place in real time, and almost no one realizes this. Good editors know they’re messing with time. Someone starts walking across a room, you cut, and he’s at the door, and the audience never notices. You can do that in film. VR is different. So I’m expressing this skepticism. But we just funded research groups at these R&D centers, and the people there think I’m missing something. If they prove me wrong, that’s awesome. I never mind being proven wrong. And the truth is, if they can’t tell stories, they’ll come up with something else. We can’t lose.

Incidentally, throughout my career there have been several times that I was skeptical of the approach that some suggested, but I wanted them to prove I was wrong. When I was doing my original rendering research and animation research at [the University of] Utah, [professor and computer graphics pioneer] Ivan Sutherland said, “I think this isn’t going to work.” He was an extremely smart mentor, who was skeptical. I viewed his objections as problems I needed to solve. Here’s a problem. Let’s go solve it.

Larry Jordan interviewed AMD’s Director of Virtual Production, James Knight, about his thoughts on AR and VR, and he also had some helpful comments.

I started our conversation by asking James what he thought about the long-term prospects for both these technologies. “Both VR and AR will be successful,” he replied, “though AR will be more common. VR allows us to escape our world – that’s its big benefit – while AR enhances our world.”

“At AMD, we create tools for technology, then let creative storytellers figure out the best way to use it.”

When I asked him whether VR will win in the long-term, James replied: “I don’t think it’s a question of winning. VR doesn’t alter filmmaking, it is an additional tool to enhance filmmaking. I think VR is part of the future, but not totally the future. Also, keep in mind that editing VR today is much harder than editing a traditional film.”

Hi

I need to insert 2D video into my 360 video so that the 2D is always shown on the screen in the same place (i.e. pinned to the top right corner) and is not rotated with the navigation of the 360 video. Is this possible? What software can help to do this?

Regards;